- in Research by Cesar Alvarez

ConnorsRSI Strategy: Sensitivity Analysis

In “Simple ConnorsRSI Strategy on S&P500 Stocks” I showed a ConnorsRSI strategy on S&P 500 stocks. In “ConnorsRSI Strategy: Optimization Selection”, I narrowed the optimization down to three variations that one could consider trading. This post explores Sensitivity Analysis (also known as Parameter Sensitivity) to help guide us on what to expect from each variation.

Definition

This is my definition of Parameter Sensitivity, not the formal definition.

Any strategy has various inputs that one may have optimized. The idea is to vary a set of these inputs by “small” amounts to determine how much the strategy results change. Does changing a parameter slightly cause a large change in the results? The focus should be on the statistics used to select the variations, which in my case were Compounded Annual Return (CAR) and Maximum Drawdown (MDD).
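For reference, here is a minimal sketch of the two statistics computed from a daily equity curve. It assumes 252 trading days per year and reports both numbers in percent, with MDD as a positive value. The function names are my own, and AmiBroker's built-in report may differ in details.

```python
def car(equity, bars_per_year=252):
    """Compounded Annual Return, in percent, from a list of daily equity values."""
    years = (len(equity) - 1) / bars_per_year
    return ((equity[-1] / equity[0]) ** (1 / years) - 1) * 100


def max_drawdown(equity):
    """Maximum Drawdown: largest peak-to-trough decline, in percent (positive number)."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)  # highest equity seen so far
        worst = max(worst, (peak - value) / peak)
    return worst * 100


print(max_drawdown([100, 120, 90, 130, 117]))  # 25.0 (the 120 -> 90 drop)
```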

What I do is randomly vary the inputs by 10-20% around the parameter value. Why 10-20%? I think this is a reasonable amount of noise for most parameters. Each person must decide how much noise to test. The more noise you add, the more I would expect the strategy to deteriorate. If it did not, that would imply the rule adds nothing to the strategy and should not be there to begin with. Picking too small an amount of noise can mislead one into thinking all is good.
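A minimal sketch of the "randomly vary by 10-20%" step, assuming the noise is drawn uniformly around the base value (the post does not name a distribution). The `perturb` helper is hypothetical.

```python
import random


def perturb(base, pct, rng):
    """Draw a value uniformly from [base * (1 - pct), base * (1 + pct)].

    pct is the noise fraction, e.g. 0.10 for 10% or 0.20 for 20%.
    """
    return rng.uniform(base * (1 - pct), base * (1 + pct))


rng = random.Random(1)  # seeded so a batch of noisy runs can be reproduced
mild = [perturb(20.0, 0.10, rng) for _ in range(1000)]    # stays within 18..22
strong = [perturb(20.0, 0.20, rng) for _ in range(1000)]  # stays within 16..24
```

Passing in a seeded `random.Random` rather than using the module-level functions keeps each batch of noisy backtests repeatable.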

Once I have run the tests with the added noise, I can look at the results and see if my selected variation is sitting on a peak, meaning whether small changes in the parameters “drastically” change the CAR and MDD. Each person will need to define what “drastically” means.

One Variable

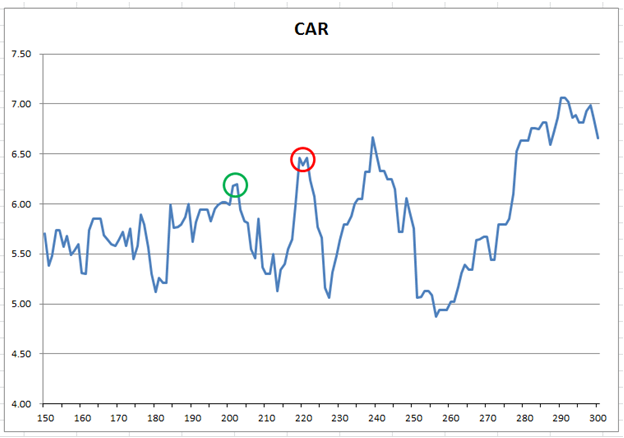

When a strategy has only a few variables, it is possible simply to test them all. Take this simple strategy on SPY: buy when the close is above its 220-day moving average and sell when it is below it. Say we originally arrived at 220 by optimizing in steps of 20 from 140 to 300. Here are the results for every individual moving average length, not just the steps of 20.
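The sketch below illustrates testing every moving-average length instead of steps of 20. Since no data ships with this post, it uses a made-up random-walk series as a stand-in for SPY. `sma_filter_car` is a hypothetical helper, and it assumes 252 bars per year, that the signal at today's close applies to the next day's return, and no commissions.

```python
import random


def sma_filter_car(closes, length, bars_per_year=252):
    """CAR (%) of: hold when close > SMA(length), flat otherwise.

    The signal at bar t's close is applied to the bar t -> t+1 return
    (no look-ahead); no commissions, slippage or interest on cash.
    """
    equity = 1.0
    window_sum = sum(closes[:length])  # sum of the first `length` closes
    for t in range(length - 1, len(closes) - 1):
        if t >= length:
            # slide the window: add today's close, drop the oldest one
            window_sum += closes[t] - closes[t - length]
        if closes[t] > window_sum / length:
            equity *= closes[t + 1] / closes[t]
    years = (len(closes) - length) / bars_per_year
    return (equity ** (1 / years) - 1) * 100


# Hypothetical stand-in for SPY: a 15-year random walk (replace with real closes).
rng = random.Random(7)
closes = [100.0]
for _ in range(252 * 15):
    closes.append(closes[-1] * (1 + rng.gauss(0.0003, 0.011)))

# Test every length from 140 to 300, not only 140, 160, ..., 300.
results = {n: sma_filter_car(closes, n) for n in range(140, 301)}
```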

You can see peaks at 200, 220 and 240. The original test in steps of 20 would lead one to think all was OK. But look at the red circle at 220: it is sitting on a peak. A 3% change in the 220 value (about 7 days) gets you to a trough with a very different return. Sitting on a peak like this is what we are trying to avoid.
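One way to make "sitting on a peak" concrete is to compare the CAR at the chosen length with the worst CAR among nearby lengths. This is a sketch, assuming the results are stored as a dict of length → CAR. The helper name and the 3% default band are illustrative, and deciding what drop counts as "drastic" is still up to you.

```python
def neighborhood_drop(results, chosen, pct=0.03):
    """Compare the CAR at `chosen` with the worst CAR within +/- pct of it.

    results: dict mapping moving-average length -> CAR (%)
    Returns (base CAR, worst nearby CAR, drop). A large drop means the
    chosen length is sitting on a narrow peak.
    """
    radius = max(1, round(chosen * pct))  # 3% of 220 -> 7 lengths either side
    nearby = [results[n] for n in range(chosen - radius, chosen + radius + 1)
              if n in results]
    base = results[chosen]
    worst = min(nearby)
    return base, worst, base - worst


# Made-up numbers shaped like the chart: a spike at 220 and a trough at 214.
toy = {n: 8.0 for n in range(200, 241)}
toy[220], toy[214] = 11.0, 5.0
print(neighborhood_drop(toy, 220))  # (11.0, 5.0, 6.0)
```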

Two Variables

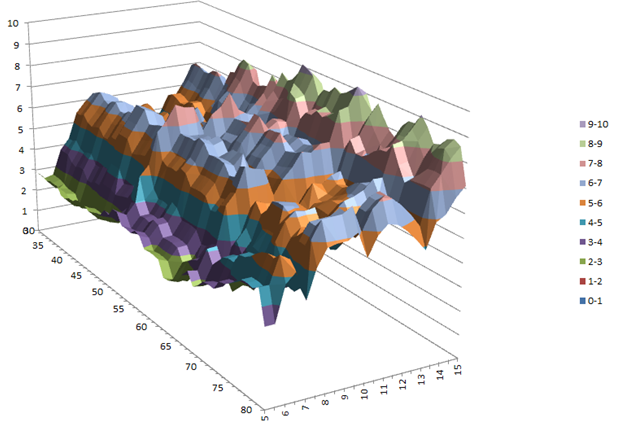

One can also chart CAR against two variables, but the result is much harder to read. Here are the results of buying SPY when RSI(2) is below X and exiting when RSI(2) is above Y.

It is much harder to see if a pair of values is near a steep peak or not.
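Because the 2D chart is hard to read, one workaround is to score each (X, Y) pair by the worst CAR among its immediate grid neighbors. This is my own suggestion, not something the post does. An isolated spike then scores poorly while a broad plateau scores well. The grid values below are invented for illustration, and `worst_neighbor` is a hypothetical helper.

```python
def worst_neighbor(grid, step_x, step_y):
    """Score each (X, Y) pair by the lowest CAR in its 3x3 block of grid neighbors.

    grid: dict mapping (x, y) -> CAR. Neighbors missing from the grid
    (edges of the test range) are simply skipped.
    """
    scores = {}
    for (x, y) in grid:
        block = [grid[(x + dx * step_x, y + dy * step_y)]
                 for dx in (-1, 0, 1) for dy in (-1, 0, 1)
                 if (x + dx * step_x, y + dy * step_y) in grid]
        scores[(x, y)] = min(block)
    return scores


# Made-up grid: X (entry RSI(2) below) 5..25, Y (exit RSI(2) above) 50..90.
grid = {(x, y): 5.0 for x in range(5, 30, 5) for y in range(50, 100, 10)}
grid[(10, 70)] = 12.0                       # isolated spike
for x in (20, 25):
    for y in (70, 80, 90):
        grid[(x, y)] = 8.0                  # broad plateau
scores = worst_neighbor(grid, 5, 10)
print(max(grid, key=grid.get), max(scores, key=scores.get))  # (10, 70) (25, 80)
```

The raw best pair is the spike, while the neighbor-aware score prefers a pair inside the plateau.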

More than Two Variables

Highest CAR

How do I handle more variables? Using Excel, of course. For the ConnorsRSI strategy variation with the highest CAR (run 934), I do the following:

- 39-week high: Randomly choose a value between 34 and 44. Even though I did not optimize this value, I want to test its sensitivity.

- The weekly high happened within the last 25 days: Randomly choose a value between 20 and 30.

- ConnorsRSI less than 20.0: Randomly choose a value up to 20% higher or lower (16 to 24). These need not be integer values; for example, the value can be 21.132.

- Enter on a 1.5% limit move down: Randomly choose a value up to 20% higher or lower (1.2% to 1.8%).

- Exit when ConnorsRSI is above 65: Randomly choose a value up to 20% higher or lower (52 to 78).

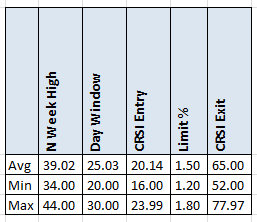

Given the above, I pick new random values in the given range for each parameter and run a backtest. I do this 1000 times to generate a large set of tests with random noise in each of the parameters. The table below shows the range and average of each of the parameters after the 1000 runs.

We can see that the average value is very close to the base value, which is what we want. We also see that the min and max values are what we expect.
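A sketch of generating the 1000 noisy parameter sets for run 934 and building the min/max/average table. It assumes uniform draws, with the week and day counts drawn as integers and both endpoints included. The parameter names and the seed are hypothetical, and the ±20% bounds are computed from the base values in the list above. The backtest itself is left out because the post runs it in AmiBroker.

```python
import random
import statistics

# Run 934 settings from the list above: (name, base, low, high, draw_as_integer).
# Names are hypothetical; +/-20% bounds are computed from the base values.
RANGES_934 = [
    ("high_lookback_weeks", 39, 34, 44, True),
    ("high_within_days", 25, 20, 30, True),
    ("crsi_entry_below", 20.0, 16.0, 24.0, False),
    ("limit_down_pct", 1.5, 1.2, 1.8, False),
    ("crsi_exit_above", 65.0, 52.0, 78.0, False),
]


def sample_params(rng):
    """One noisy parameter set: randint (inclusive) for counts, uniform otherwise."""
    return {name: rng.randint(low, high) if as_int else rng.uniform(low, high)
            for name, _base, low, high, as_int in RANGES_934}


rng = random.Random(934)
runs = [sample_params(rng) for _ in range(1000)]
# Each dict in `runs` would be fed to the backtester (AmiBroker in the post).

# Min / max / average of each parameter across the 1000 runs.
for name, base, _low, _high, _as_int in RANGES_934:
    values = [run[name] for run in runs]
    print(f"{name:20} base={base:<5} min={min(values):7.3f} "
          f"max={max(values):7.3f} avg={statistics.mean(values):7.3f}")
```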

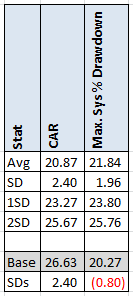

From these 1000 runs, I compute the following for CAR and MDD: the average, the standard deviation, and the number of standard deviations the base run is from that average.

The average CAR of our 1000 runs is 20.87 and the average MDD is 21.84, with standard deviations of 2.40 and 1.96 respectively. How does this compare to the base variation with no noise? Our base run had a CAR of 26.63, which is 2.40 standard deviations above the average ((26.63 - 20.87) / 2.40 = 2.40). Ideally I like to see this number under 1 standard deviation. Between 1 and 2 is a grey area. Above 2, I believe the variation is on a peak and should be discarded. The base MDD, on the other hand, is below the average, which is a good sign. This is why one should not gravitate to the highest value: you are likely sitting on or near a peak.
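A minimal sketch of the score and the rule of thumb above. It assumes the sample standard deviation (Excel's STDEV.S); the post does not say which one its spreadsheet uses. The function names are hypothetical.

```python
import statistics


def sensitivity_score(base_value, noisy_values):
    """How many standard deviations the no-noise base run sits above the noisy average.

    Uses the sample standard deviation (like Excel's STDEV.S); the post does
    not say which one its spreadsheet uses, so treat that as an assumption.
    """
    return (base_value - statistics.mean(noisy_values)) / statistics.stdev(noisy_values)


def classify_car(score):
    """The post's rule of thumb for CAR: under 1 good, 1 to 2 grey, above 2 discard."""
    if score < 1:
        return "ok"
    if score <= 2:
        return "grey area"
    return "likely on a peak: discard"


# Run 934 summary figures from the text: base CAR 26.63, average 20.87, std dev 2.40.
z = (26.63 - 20.87) / 2.40
print(round(z, 2), classify_car(z))  # 2.4 likely on a peak: discard
```

For MDD the sign matters. Lower drawdown is better, so a base MDD below the noisy average (a negative score), as with run 934, is the favorable case.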

Ulcer Index

For the Ulcer Index variation (run 673), I do the following:

- 39-week high: Randomly choose a value between 34 and 44.

- The weekly high happened within the last 20 days: Randomly choose a value between 15 and 25.

- ConnorsRSI less than 20: Randomly choose a value up to 20% higher or lower (16 to 24). These need not be integer values.

- Enter on a 1.5% limit move down: Randomly choose a value up to 20% higher or lower (1.2% to 1.8%).

- Exit when ConnorsRSI is above 60: Randomly choose a value up to 20% higher or lower (48 to 72).

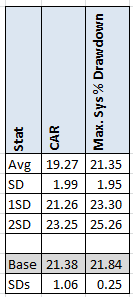

Results after 1000 runs.

Here, the base CAR is 1.06 standard deviations above the average, barely into my grey zone, while the MDD is about the same as the average. Adding noise had no large impact on the results.

Histogram Method

For the Histogram Method variation (run 678), I do the following:

- 39-week high: Randomly choose a value between 34 and 44.

- The weekly high happened within the last 20 days: Randomly choose a value between 15 and 25.

- ConnorsRSI less than 22.5: Randomly choose a value up to 20% higher or lower (18 to 27). These need not be integer values; for example, the value can be 21.132.

- Enter on a 1.5% limit move down: Randomly choose a value up to 20% higher or lower (1.2% to 1.8%).

- Exit when ConnorsRSI is above 60: Randomly choose a value up to 20% higher or lower (48 to 72).

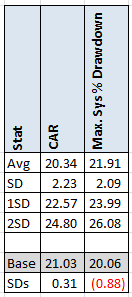

Results after 1000 runs.

The average CAR of the Histogram Method is higher than that of the Ulcer Index Method, and the standard deviation is lower. The base CAR is now only 0.31 standard deviations above the average of the 1000 runs. This is good; it is definitely not on a peak. The MDD is 0.88 standard deviations from the average, still under one. This variation has a chance of performing well going forward.

AmiBroker Code

I am providing the code to do the Parameter Sensitivity analysis in AmiBroker. The code is not meant for beginners, and it runs the analysis on a simple RSI strategy. To get the code, fill in the form below.

Spreadsheet

Fill in the form below to get the spreadsheet with the results of the runs and the calculations. You can then apply the same calculations to any other columns you find important.

Final Thoughts

As we can see, a parameter sensitivity analysis can help us determine whether we have picked a variation on a peak. Does not being on or near a peak mean that our strategy will work going forward? No, it does not. We are trying to put the odds in our favor by avoiding those peaks. The only ‘real’ test of a strategy is how it does in real trading. No amount of testing or analysis will give us that answer.

Backtesting platform used: AmiBroker. Data provider: Norgate Data

Good quant trading,
